DNS in a Distributed Homelab: Control, Constraints, and What Actually Works

As internal services evolve and automation becomes more central, one challenge keeps resurfacing: DNS management.

Keeping track of dynamically allocated IPs, ephemeral services, and changing infrastructure does not get easier over time—it becomes a source of increasing complexity.

To address parts of this, I’ve used and tested out:

These help structure inventory, but they don’t fully solve the problem of how DNS should behave in a dynamic, distributed environment.

The Context: A Distributed Setup That Grew Organically

I run a shared homelab/data center setup across multiple physical locations together with friends. As the system has grown, so has the complexity around:

- IP range management (private ranges)

- Routing (VPN)

- DNS resolution (internal and external services)

Services move, scale, and get redeployed. Once you start down that rabbit hole, static assumptions break down quickly.

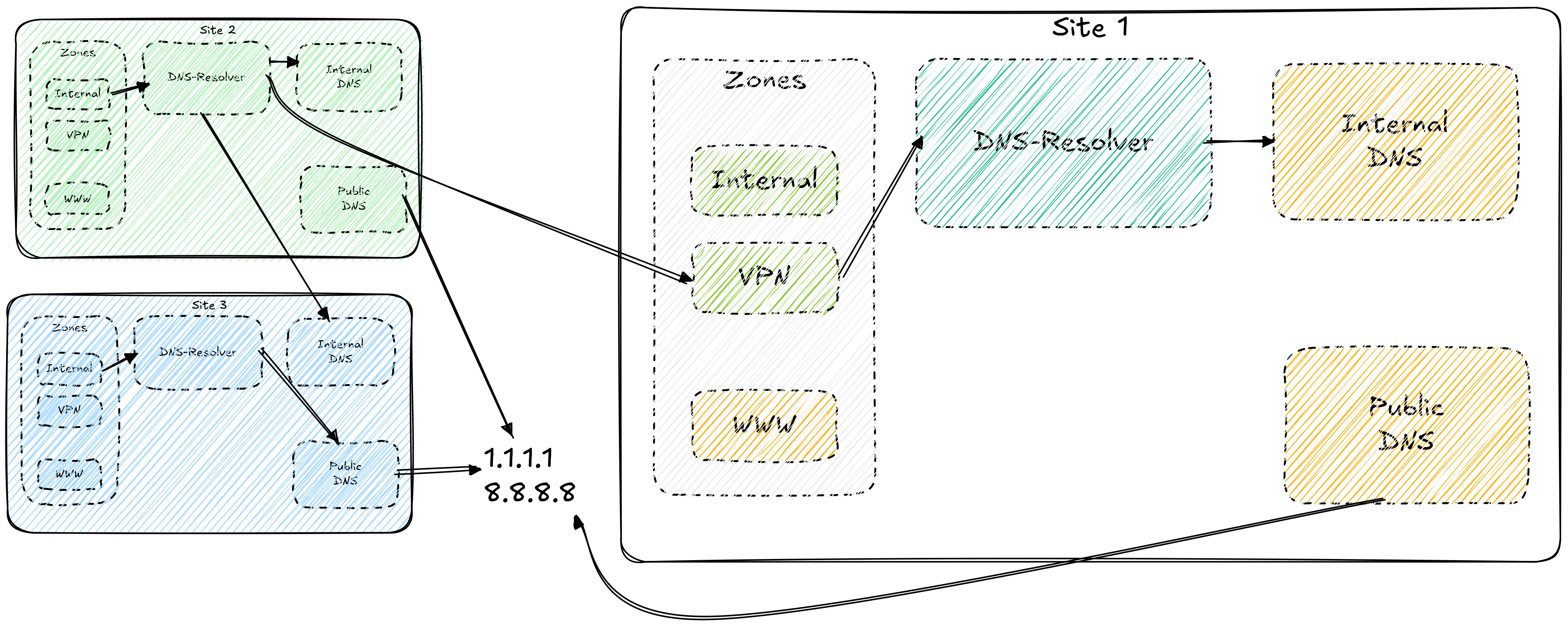

The Architecture: Simple, Distributed, and Actually Working

We’ve converged on a solution that is both simple and robust—and importantly: it works in practice.

At the core is BIND, used to build a layered DNS structure:

Key principles:

- Each location runs its own local DNS resolver

- Resolvers point to secondary resolvers at other sites

- Data and resolution capability are distributed across locations
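Here is a toy model of the failover principle. It puts the local resolver first and the other sites' resolvers after it, then asks each one in turn until one answers. This is not how BIND itself picks among several forwarders; BIND applies its own server-selection logic. The site names, addresses, and the `query` callback are hypothetical placeholders.

```python
from typing import Callable, Dict, List, Optional, Sequence

# Hypothetical sites and resolver addresses; the real layout is not described.
SITE_RESOLVERS: Dict[str, str] = {
    "site-a": "10.10.0.53",
    "site-b": "10.20.0.53",
    "site-c": "10.30.0.53",
}


def resolver_order(local_site: str, sites: Dict[str, str]) -> List[str]:
    """Return the local site's resolver first, then the other sites' resolvers."""
    remote = [addr for site, addr in sorted(sites.items()) if site != local_site]
    return [sites[local_site]] + remote


def resolve_with_failover(
    name: str,
    resolvers: Sequence[str],
    query: Callable[[str, str], Optional[str]],
) -> Optional[str]:
    """Ask each resolver in order and return the first answer.

    `query(resolver, name)` stands in for a real DNS lookup. In this toy model
    it returns None only when the resolver is unreachable; a real client must
    not fail over on an authoritative "no such name" answer.
    """
    for resolver in resolvers:
        answer = query(resolver, name)
        if answer is not None:
            return answer
    return None  # every site was unreachable


if __name__ == "__main__":
    order = resolver_order("site-b", SITE_RESOLVERS)
    print(order)  # ['10.20.0.53', '10.10.0.53', '10.30.0.53']

    down = {"10.20.0.53"}  # pretend the local resolver is offline

    def fake_query(resolver: str, name: str) -> Optional[str]:
        return None if resolver in down else f"answer for {name} via {resolver}"

    print(resolve_with_failover("nas.home.arpa", order, fake_query))
```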

Each resolver then forwards queries to internal DNS servers that:

- Serve local IP ranges via internal zones

- Expose services through both internal and external URLs

- Enforce allow-lists for what can be resolved
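For the "serve local IP ranges" part, each site's private range maps naturally onto a reverse (`in-addr.arpa`) zone that the internal servers could be authoritative for. The sketch below uses Python's standard `ipaddress` module. It assumes hypothetical per-site `/16` allocations on octet boundaries.

```python
import ipaddress
from typing import Dict, Optional, Tuple

# Hypothetical per-site private allocations; substitute the real ranges.
SITE_RANGES: Dict[str, ipaddress.IPv4Network] = {
    "site-a": ipaddress.IPv4Network("10.10.0.0/16"),
    "site-b": ipaddress.IPv4Network("10.20.0.0/16"),
}


def reverse_zone(network: ipaddress.IPv4Network) -> str:
    """in-addr.arpa zone name for a network on an octet boundary (/8, /16, /24)."""
    if network.prefixlen not in (8, 16, 24):
        raise ValueError("sketch only handles /8, /16 and /24 networks")
    octets = str(network.network_address).split(".")[: network.prefixlen // 8]
    return ".".join(reversed(octets)) + ".in-addr.arpa"


def owning_site(address: str) -> Optional[str]:
    """Which site's range contains this address, if any."""
    ip = ipaddress.ip_address(address)
    for site, network in SITE_RANGES.items():
        if ip in network:
            return site
    return None


def ptr_plan(address: str) -> Optional[Tuple[str, str, str]]:
    """(site, reverse zone, PTR owner name) for an address in a known range."""
    site = owning_site(address)
    if site is None:
        return None
    ptr_name = ipaddress.ip_address(address).reverse_pointer
    return site, reverse_zone(SITE_RANGES[site]), ptr_name


if __name__ == "__main__":
    for zone_site, net in SITE_RANGES.items():
        print(zone_site, reverse_zone(net))
    print(ptr_plan("10.20.1.5"))  # ('site-b', '20.10.in-addr.arpa', '5.1.20.10.in-addr.arpa')
```

Keeping the range-to-site mapping in one place means the zone definitions and the "who owns this IP" question come from the same data.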

DNS as a Control Plane

A central idea in this setup is:

DNS is not just a lookup service—it is a control layer

- We log every request, and a "new request" (one not seen before) lights up as a flare in our log rig.
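The post says the request log ends up in Neo4j. One way to make a "new request" stand out is to MERGE a client-to-domain relationship and stamp it only when it is first created. The graph schema here (`Client`, `Domain`, `QUERIED`, the `reviewed` flag) is a hypothetical sketch, not the setup's actual model.

```python
from datetime import datetime, timezone
from typing import Dict, Optional

# Hypothetical schema: (:Client)-[:QUERIED]->(:Domain).
# ON CREATE only fires the first time a client asks for a domain, so new
# relationships start out with reviewed = false: those are the "flares".
LOG_QUERY = """
MERGE (c:Client {ip: $client})
MERGE (d:Domain {name: $qname})
MERGE (c)-[r:QUERIED]->(d)
ON CREATE SET r.first_seen = $ts, r.last_seen = $ts, r.count = 1, r.reviewed = false
ON MATCH SET r.last_seen = $ts, r.count = r.count + 1
"""

# Everything that has not been evaluated yet, newest first.
# UTC ISO-8601 strings sort chronologically, so ordering on them is safe.
FLARES_QUERY = """
MATCH (c:Client)-[r:QUERIED {reviewed: false}]->(d:Domain)
RETURN c.ip AS client, d.name AS domain, r.first_seen AS first_seen
ORDER BY r.first_seen DESC
"""


def log_params(client: str, qname: str, when: Optional[datetime] = None) -> Dict[str, str]:
    """Parameters for LOG_QUERY; names are normalised so duplicates merge."""
    ts = (when or datetime.now(timezone.utc)).isoformat()
    return {"client": client, "qname": qname.rstrip(".").lower(), "ts": ts}
```

With the official `neo4j` Python driver, the statement could be sent with `session.run(LOG_QUERY, log_params(client, qname))` inside a session. `FLARES_QUERY` then lists the relationships nobody has evaluated yet.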

Instead of allowing unrestricted resolution:

- Unknown or non-approved queries are redirected to an internal “catch-all”

- This catch-all acts as a controlled boundary
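The catch-all boundary comes down to one decision per query. If the name is on the allow-list, or is a subdomain of an allowed name, the query passes through. Otherwise the resolver answers with the catch-all's address. In BIND, a Response Policy Zone (RPZ) is one possible way to express this, though the post does not say which mechanism is used. The Python sketch below only models the decision. The allow-list entries and the catch-all address are hypothetical.

```python
from typing import FrozenSet, Optional, Tuple

# Hypothetical allow-list and catch-all address.
ALLOWED: FrozenSet[str] = frozenset({"home.arpa", "deb.debian.org"})
CATCH_ALL_ADDRESS = "10.0.0.250"


def normalize(qname: str) -> str:
    return qname.rstrip(".").lower()


def is_allowed(qname: str, allowed: FrozenSet[str] = ALLOWED) -> bool:
    """True if qname is an allowed name or a subdomain of one.

    Matching is done on whole labels: for "a.b.c" we test "a.b.c", "b.c" and "c".
    """
    labels = normalize(qname).split(".")
    return any(".".join(labels[i:]) in allowed for i in range(len(labels)))


def decide(qname: str, allowed: FrozenSet[str] = ALLOWED) -> Tuple[str, Optional[str]]:
    """("pass", None) for approved names, ("redirect", catch-all IP) otherwise."""
    if is_allowed(qname, allowed):
        return "pass", None
    return "redirect", CATCH_ALL_ADDRESS
```

Matching on whole labels matters here. A naive `endswith("debian.org")` would also approve `evildebian.org`.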

From there, a deliberate “break glass” mechanism is available:

- Authentication (username/password)

- Temporary “resolve-all” access (e.g. during installation)

- Or permanent allow-listing of specific domains
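Below is a minimal sketch of the break-glass state. It assumes authentication has already succeeded before a grant is issued, so the username/password check is not shown. Temporary "resolve-all" grants carry an expiry, and permanent allow-listing simply extends the set. The class and method names are hypothetical.

```python
import time
from typing import Dict, Iterable, Optional


class BreakGlass:
    """Hypothetical break-glass state: expiring resolve-all grants plus a permanent allow-list."""

    def __init__(self, allowed: Iterable[str]):
        self.allowed = {d.rstrip(".").lower() for d in allowed}
        self._grants: Dict[str, float] = {}  # client IP -> expiry (epoch seconds)

    def grant_resolve_all(self, client: str, minutes: int, now: Optional[float] = None) -> float:
        """Open resolution for one client for a limited time (call only after auth)."""
        now = time.time() if now is None else now
        expiry = now + minutes * 60
        self._grants[client] = expiry
        return expiry

    def allow_permanently(self, domain: str) -> None:
        self.allowed.add(domain.rstrip(".").lower())

    def may_resolve(self, client: str, qname: str, now: Optional[float] = None) -> bool:
        now = time.time() if now is None else now
        expiry = self._grants.get(client)
        if expiry is not None:
            if now < expiry:
                return True
            del self._grants[client]  # grant has lapsed; drop it
        # Otherwise fall back to the allow-list (name or any parent, label-aware).
        labels = qname.rstrip(".").lower().split(".")
        return any(".".join(labels[i:]) in self.allowed for i in range(len(labels)))
```

Grants expire on their own, so a forgotten "resolve-all" during an installation closes itself instead of lingering.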

This introduces a balance between:

- Security

- Operational control

- Flexibility when needed

Why This Matters

Letting everything resolve freely may feel simpler—but it quickly leads to:

- Loss of visibility

- Increased attack surface

- Harder debugging

- Unpredictable behavior across environments

By constraining DNS and making access explicit, the system becomes:

- More predictable

- Easier to reason about

- Easier to operate over time

The Trade-off: Friction vs Control

Yes — this introduces friction.

But it is intentional friction. Most of our systems have no need to resolve external services at all. The exception is bursts during updates, and that is nothing a cron job can't solve.

We trade a bit of convenience for:

- traceability: we log behavior to Neo4j, and every new entry lights up and is evaluated.

- reproducibility: when we redeploy a system, we know what it is supposed to talk to and can preconfigure this during the instrumentation process with Ansible.

- a clearer understanding of what our systems are actually doing
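Because the expected destinations are known at deploy time, they can live next to the deployment data and be compared with what the DNS log actually shows. The manifest layout below is a hypothetical stand-in for whatever the Ansible inventory holds. The comparison surfaces two things: unexpected lookups, and declared dependencies that never appear.

```python
from typing import Dict, List, Set

# Hypothetical manifest: host -> names it is expected to resolve.
MANIFEST: Dict[str, Dict[str, List[str]]] = {
    "nas-01": {"talks_to": ["backup.home.arpa", "deb.debian.org"]},
    "web-01": {"talks_to": ["db.home.arpa"]},
}


def expected_names(manifest: Dict[str, Dict[str, List[str]]]) -> Dict[str, Set[str]]:
    return {host: {n.lower() for n in spec.get("talks_to", [])} for host, spec in manifest.items()}


def compare(
    manifest: Dict[str, Dict[str, List[str]]],
    observed: Dict[str, Set[str]],
) -> Dict[str, Dict[str, Set[str]]]:
    """Per host: names queried but not declared, and names declared but never queried."""
    expected = expected_names(manifest)
    report = {}
    for host in expected.keys() | observed.keys():
        exp = expected.get(host, set())
        obs = {n.rstrip(".").lower() for n in observed.get(host, set())}
        report[host] = {"unexpected": obs - exp, "unused": exp - obs}
    return report
```

The "unexpected" set is what should light up for review. The "unused" set hints that the manifest has drifted from reality.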

Open Questions

Even though this setup works well, there are still open areas worth exploring:

- How should inventory (IPs, services, DNS) be modeled consistently? We have not yet settled on a final plan.

- Where is the right boundary between automation and manual control?

- Should DNS evolve into a policy engine—or stay a pure resolver?

- How much dynamism can DNS realistically support before abstraction is required?